Data Sovereignty & AI: Enterprise DWH

How do you keep your data under control when AI models need access to everything? This webinar shows how to combine data governance with modern AI capabilities.

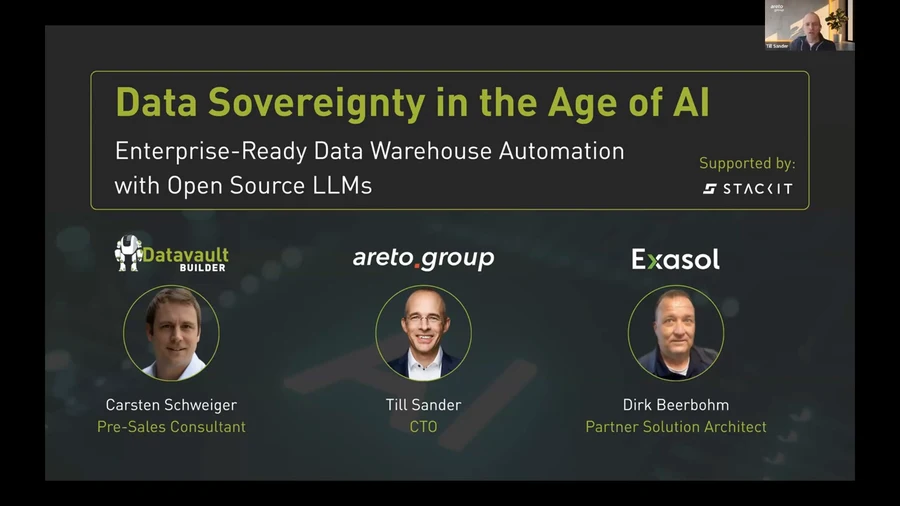

AI transforms what enterprise data platforms need to deliver. But as models demand access to more data, a critical question emerges: how do you build AI capabilities without giving up control over your data? In this webinar, Carsten Schweiger (Pre-Sales Consultant, Datavault Builder), Till Sander (CTO, areto.group) and Dirk Beerbohm (Partner Solution Architect, Exasol) tackle data sovereignty head-on — and show how open source LLMs, a governed data warehouse, and high-performance analytics can work together.

Supported by STACKIT — the European sovereign cloud.

Why data sovereignty matters now

Till Sander opens with a historical framing: AI is a general-purpose technology, and it marks the third major era of exponential growth, after the book (knowledge) and steam (value). Each of those eras fundamentally reshaped society, and the AI era is no different.

The problem: most AI implementations default to models hosted by external providers (OpenAI, Google, Anthropic), where your data leaves your environment for inference. For enterprises in regulated industries such as banking, healthcare, and the public sector, this is a fundamental blocker. Open source LLMs change that equation: models like Llama or Mistral can run entirely within your own infrastructure, on a sovereign cloud or on-premises, with no data leaving your control.

The three-layer answer: DWH automation + Exasol + open source LLMs

The webinar demonstrates a reference architecture combining three components:

1. Datavault Builder: governed data foundation

Datavault Builder builds the enterprise data warehouse that feeds everything downstream. Its model-driven approach produces lineage, audit trails, and documentation automatically, and this governance layer is what makes AI outputs trustworthy. Business users get clean, context-enriched data products in the Gold layer, ready for both BI and AI workloads.

2. Exasol: in-memory analytics engine

Exasol provides the high-performance query layer that sits between the data warehouse and the AI models. Its in-memory architecture handles the analytical workloads that LLMs need for grounding, without the latency issues that plague traditional disk-based databases. Combined with Datavault Builder’s structured output layers, this means queries run against clean, documented data rather than raw staging data.

3. Open source LLMs on STACKIT

STACKIT provides the sovereign European cloud infrastructure. Open source LLMs, running as inference endpoints within the sovereign environment, can query the Exasol layer via natural language (asking questions, generating reports, and feeding agentic workflows) without the data ever leaving the European regulatory perimeter.
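To make the flow between the three layers more concrete, here is a minimal Python sketch of a natural-language question being turned into SQL and run only against governed Gold-layer objects. It is an illustration under stated assumptions, not the setup shown in the webinar: `generate_sql` is a hypothetical stand-in for a call to a self-hosted LLM endpoint, the view name `gold_revenue_by_region` is invented, and an in-memory SQLite database stands in for the analytics engine so the example runs anywhere.

```python
import sqlite3

# Hypothetical allow-list: only governed Gold-layer views are exposed to the model.
ALLOWED_VIEWS = {"gold_revenue_by_region"}


def generate_sql(question: str) -> str:
    """Stand-in for a self-hosted LLM endpoint (assumption, hard-coded here).

    A real implementation would send the question plus schema metadata to a
    model running inside the sovereign environment and return its SQL.
    """
    return (
        "SELECT region, SUM(revenue) AS revenue "
        "FROM gold_revenue_by_region GROUP BY region ORDER BY region"
    )


def referenced_views(sql: str) -> set:
    """Rough check: collect the identifiers that follow FROM or JOIN."""
    tokens = sql.replace(",", " ").split()
    return {
        tokens[i + 1].lower()
        for i, tok in enumerate(tokens[:-1])
        if tok.upper() in {"FROM", "JOIN"}
    }


def answer(question: str, conn: sqlite3.Connection) -> list:
    """Generate SQL for the question and run it only if it stays in the Gold layer."""
    sql = generate_sql(question)
    outside = referenced_views(sql) - ALLOWED_VIEWS
    if outside:
        raise PermissionError(f"query touches ungoverned objects: {outside}")
    return conn.execute(sql).fetchall()


def demo_connection() -> sqlite3.Connection:
    """Build a tiny in-memory database that stands in for the analytics engine."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE gold_revenue_by_region (region TEXT, revenue REAL)")
    conn.executemany(
        "INSERT INTO gold_revenue_by_region VALUES (?, ?)",
        [("DACH", 120.0), ("Nordics", 80.0), ("DACH", 30.0)],
    )
    return conn


if __name__ == "__main__":
    print(answer("What is revenue by region?", demo_connection()))
```

The design point of the sketch is that the model never receives the data itself; it only proposes a query, and the query is checked against the governed layer before it runs. A production setup would use a proper SQL parser and the database's own access controls rather than the simple token check used here.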

What this means for AI governance

The key architectural principle: AI works with the data you already govern. There is no separate AI data lake, no shadow copy, no new pipeline to maintain. The same Data Vault model that powers your BI reports also powers your AI queries. This means:

- Every AI output is traceable to a source table via automatic lineage

- Business rules applied in the Business Vault layer apply equally to AI queries

- GDPR compliance, data residency, and access controls are inherited — not bolted on

- Open source models can be updated, audited, and replaced without vendor lock-in
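The traceability point above can be sketched in a few lines of Python. This is a hypothetical illustration only: the `LINEAGE` dictionary and all object names in it are invented for the example and do not reflect Datavault Builder's actual metadata format. It assumes the lineage graph is acyclic and shows how an object used in an AI answer could be resolved back to its source tables.

```python
# Hypothetical lineage metadata: each object lists the objects it is derived from.
# Names are invented for illustration; the graph is assumed to contain no cycles.
LINEAGE = {
    "gold.revenue_by_region": ["business_vault.revenue"],
    "business_vault.revenue": ["raw_vault.sat_invoice", "raw_vault.hub_customer"],
    "raw_vault.sat_invoice": ["source.erp_invoices"],
    "raw_vault.hub_customer": ["source.crm_customers"],
}


def trace_to_sources(obj: str, lineage: dict = LINEAGE) -> set:
    """Walk the lineage graph upstream until objects with no parents are reached."""
    parents = lineage.get(obj)
    if not parents:
        # No recorded parents: treat the object as an original source.
        return {obj}
    sources = set()
    for parent in parents:
        sources |= trace_to_sources(parent, lineage)
    return sources


if __name__ == "__main__":
    print(sorted(trace_to_sources("gold.revenue_by_region")))
```

Because AI queries run against the same governed objects as BI reports, a lookup like this would apply to both without a second lineage store.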

Who this is for

This architecture is most relevant for:

- Regulated industries (banking, insurance, healthcare, public sector) where data cannot leave the EU or must remain under the organisation’s control

- Enterprises already running Datavault Builder who want to extend their data platform into AI without rebuilding the governance layer

- Data teams evaluating sovereign AI who need a concrete reference architecture, not just a vendor pitch

Interested in seeing how this works for your data environment? Book a free 20-minute demo — we’ll walk through the architecture relevant to your use case.